How to Build an Agentic AI Recruiting Strategy: A Step-by-Step Adoption Guide for TA Teams

The gap between what enterprise TA teams think they're doing with AI and what they're actually doing is wider than the industry typically acknowledges. Roughly 88% of organizations have adopted some form of AI. Only 11-14% have reached production readiness with governed agentic workflows running in their day-to-day operations. The rest are running experiments, generating scattered wins, and calling it a strategy.

This post is a practical adoption guide for TA leaders who want to move from that first category into the second. It draws directly from the 2026 Agentic TA Operations Blueprint, which is based on third-party research from Gartner, IBM, and Greenhouse, qualitative interviews with talent acquisition leaders at three enterprise organizations, and proprietary data from candidate.fyi's platform.

Summary

Agentic AI systems execute multi-step workflows autonomously, accessing your ATS, calendars, and communication tools without waiting for a human prompt at each stage. Most TA teams are operating at the chatbot level, even when vendors claim otherwise.

Enterprise organizations with formal AI value frameworks see 55% average ROI versus 5.9% for those without.

The clearest ROI in TA is in coordination: agentic scheduling reduces manual scheduling time from 243 minutes to 27 minutes per interview. Successful adoption follows three stages: chatbot-assisted, transitioning to agentic, and fully autonomous.

Teams that skip foundational work at Stage 1 struggle to scale at Stage 3. The governance risks are real, and most TA teams are not planning for them.

Why Agentic AI Is a Separate Category From the Tools Your Team Already Uses

If you've read our breakdown of AI agents versus chatbots, you already know the core distinction: a chatbot completes tasks you initiate; an agent executes goals you set. The practical gap between those two things is significant.

A chatbot drafts a job description when you ask it to. An agent monitors your open requisitions each morning, identifies which roles have pipeline stalls, flags which senior interviewers are approaching their weekly capacity, and delivers a prioritized action list to your recruiting lead in Slack before anyone opens a browser.

Same goal. Fundamentally different amount of human coordination required.

For TA leaders evaluating their current stack, Gartner's projection is worth sitting with: 40% of enterprise applications will embed task-specific AI agents by the end of 2026, up from less than 5% in early 2025. Teams building the foundation now will have a meaningful advantage when that shift arrives.

Where Enterprise TA Teams Actually Stand on AI Adoption Today

The honest picture is that most TA teams are further behind than they believe.

Broad AI adoption is near-universal. Roughly 72% of organizations use generative AI tools like ChatGPT, Gemini, or Claude for content drafting, FAQ resolution, and summarization. When TA teams say they are "using AI," this is usually what they mean: recruiters writing job descriptions faster, coordinators cleaning up interview notes, hiring managers getting feedback summaries before debriefs.

These gains are real. They are also the starting line, not the destination.

Only 23-25% of organizations are actively trialing or scaling AI agents, and the 11-14% who have reached production readiness are running fully autonomous workflows, not polished chatbot experiments. The 62% stuck in what researchers call "Pilot Purgatory" are experimenting with AI tools and generating scattered productivity wins, but they have not integrated those tools into core operational workflows at scale.

The IBM Institute for Business Value puts numbers to the gap: organizations with formal AI value frameworks average 55% ROI. Those without average 5.9%. The tools are largely the same. The difference is governance discipline, data readiness, and the decision to treat AI as a core strategic capability rather than a collection of productivity shortcuts.

For TA leaders, that framing clarifies the question. The issue is not whether your team is using AI. The issue is whether you have built the infrastructure for AI to actually change how work gets done.

The Three Stages of Agentic AI Adoption in Recruiting

The 2026 Agentic TA Operations Blueprint examined three enterprise organizations at different points on the adoption curve. Their experiences show what each stage looks like in practice and what separates teams that scale from teams that stall.

Stage 1: Chatbot-Assisted

A 6,000-person tech company with enterprise licenses for ChatGPT, Gemini, and Claude represents this stage. Close to 100% of the organization uses AI in some capacity, and recruiting is one of the heaviest adopters.

The team built custom agents for job description writing, feeding the system their job architecture and internal HR documentation so it generates JDs that match their standards consistently. A second agent takes the JD output and generates interview questions tailored to each role. Recruiters also use AI daily to consolidate interview notes and prep structured summaries for hiring manager debriefs.

JD creation that previously took days due to multi-stakeholder approval chains now happens in a fraction of that time. The time savings are genuine and adoption is high, but every task still requires manual prompting, and no work happens autonomously in the background.

That is the defining characteristic of Stage 1: AI makes your individual tasks faster, but coordinators and recruiters still drive every step of the workflow.

Stage 2: Transitioning to Agentic

A mid-size data company with roughly 500 employees has moved beyond basic chat into custom agents that reference internal knowledge and connect to real systems.

Their most visible project is an alignment guide agent. Every open role requires an alignment guide, and hiring managers historically resisted creating them. The team built an agent that references all past alignment guides stored in Google Drive and uses that historical data to generate a first draft for any new role. The result reflects how the company has actually hired before, tailored to the specific position, and ready for human review.

A recruiting coordinator on the team went further: she used Gemini to write code for an automated visitor check-in system for on-site interviews. No engineering support was involved. She prompted her way through the build and deployed a system that pings the right person when a candidate arrives. The primary barriers to going further are token costs and systems integration complexity.

Stage 3: Autonomous

A recruiting coordination lead at a mid-size consumer brand has built fully autonomous workflows that run every morning without human prompting.

Using Claude Cowork, the system generates a daily executive interview capacity report that lists every senior leader's interview load for the week, flags anyone approaching their weekly maximum, and identifies which executives have flexibility for additional scheduling. A separate workflow monitors pipeline health across all open requisitions, reads Slack conversations from hiring channels, surfaces candidate drop-off patterns, and flags roles where delays may be causing withdrawals. Both are delivered to Slack every morning.

"It does the things I don't have time to do," the coordinator noted in our research. "It takes over in 10 minutes what would take me hours to look at and go through all 47 open reqs."

When autonomous systems handle the daily operational scan, coordinators start the day already informed and positioned to act on what matters. That is the qualitative shift Stage 3 produces: from reactive calendar management to proactive strategic oversight.
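The capacity check behind a report like this can be sketched in a few lines, assuming each leader's interview count has already been pulled from the calendar or ATS. The names (`InterviewerLoad`, `capacity_report`), the 80% warning threshold, and the sample data are illustrative assumptions, not the coordinator's actual implementation.

```python
from dataclasses import dataclass


@dataclass
class InterviewerLoad:
    name: str
    scheduled: int   # interviews already booked this week
    weekly_max: int  # agreed weekly ceiling for this interviewer


def capacity_report(loads, warn_ratio=0.8):
    """Split interviewers into those near their weekly max and those with room.

    warn_ratio is a hypothetical threshold: at or above 80% of the weekly
    maximum, an interviewer is flagged as approaching capacity.
    """
    near_limit, available = [], []
    for load in loads:
        remaining = load.weekly_max - load.scheduled
        if load.scheduled >= warn_ratio * load.weekly_max:
            near_limit.append((load.name, remaining))
        else:
            available.append((load.name, remaining))
    return {"near_limit": near_limit, "available": available}


# Illustrative data only.
loads = [
    InterviewerLoad("VP Engineering", scheduled=5, weekly_max=6),
    InterviewerLoad("Chief Product Officer", scheduled=2, weekly_max=6),
]
report = capacity_report(loads)
```

In a Stage 3 setup, a scheduled job would run this check each morning and post the result to Slack. The delivery step is omitted here because it depends on your tooling.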

Which Recruiting Workflows Are Best Suited for Agentic AI

Not every part of the hiring funnel delivers the same return on agentic automation. The 2026 Agentic TA Operations Blueprint maps five stages of the hiring funnel against their AI automation potential and measured outcomes.

Sourcing and outreach sees 44% higher response rates and 62% fewer profiles reviewed when agents handle semantic matching and initial outreach, while human recruiters focus on relationship-building and brand storytelling.

Screening produces a 75% reduction in screening labor and 40% higher shortlist quality when agents evaluate technical assessments autonomously, with human review reserved for contextual and cultural fit decisions.

Coordination is where the gap between chatbot and agentic is largest and most measurable. Scheduling costs drop from $15-25 per hire to under $1 per hire when agents handle calendar access, self-scheduling logic, and conflict resolution. Response time compresses from 7-18 hours on average to under 2 minutes.

Feedback collection sees an 11-point eNPS increase when agents handle automated pulse surveys and FAQ resolution, freeing recruiters for strategic career conversations.

Onboarding moves toward a 5-day productivity target when agents handle end-to-end provisioning and document management, with human attention focused on cultural immersion and team integration.

Coordination sits at the center of most successful agentic TA implementations because interview scheduling is the highest-volume, most repetitive, and most measurable task in the hiring process. It is also where breakdowns have the most visible downstream consequences for candidate experience and hiring velocity.

How to Build Your Agentic AI Adoption Roadmap

The Blueprint's three-phase framework reflects how the enterprise organizations in our research actually progressed, not an idealized sequence. Teams that skip phases typically hit the same wall: automation running on a broken process foundation.

Phase 1: Standardize (Now)

Secure enterprise licenses for the major LLMs so your team works within governed environments. Build custom agents for the highest-volume repetitive tasks: job description writing, interview question generation, and feedback consolidation. Train these agents on your company's tone of voice, job architecture, and inclusive language standards.

The goal at Phase 1 is not transformation. The goal is standardization. The work you do here becomes the foundation for agentic workflows later. Teams that skip this phase and jump directly to autonomous systems typically find that agents produce inconsistent outputs because the inputs were never standardized in the first place.

Phase 2: Connect (Next 6 Months)

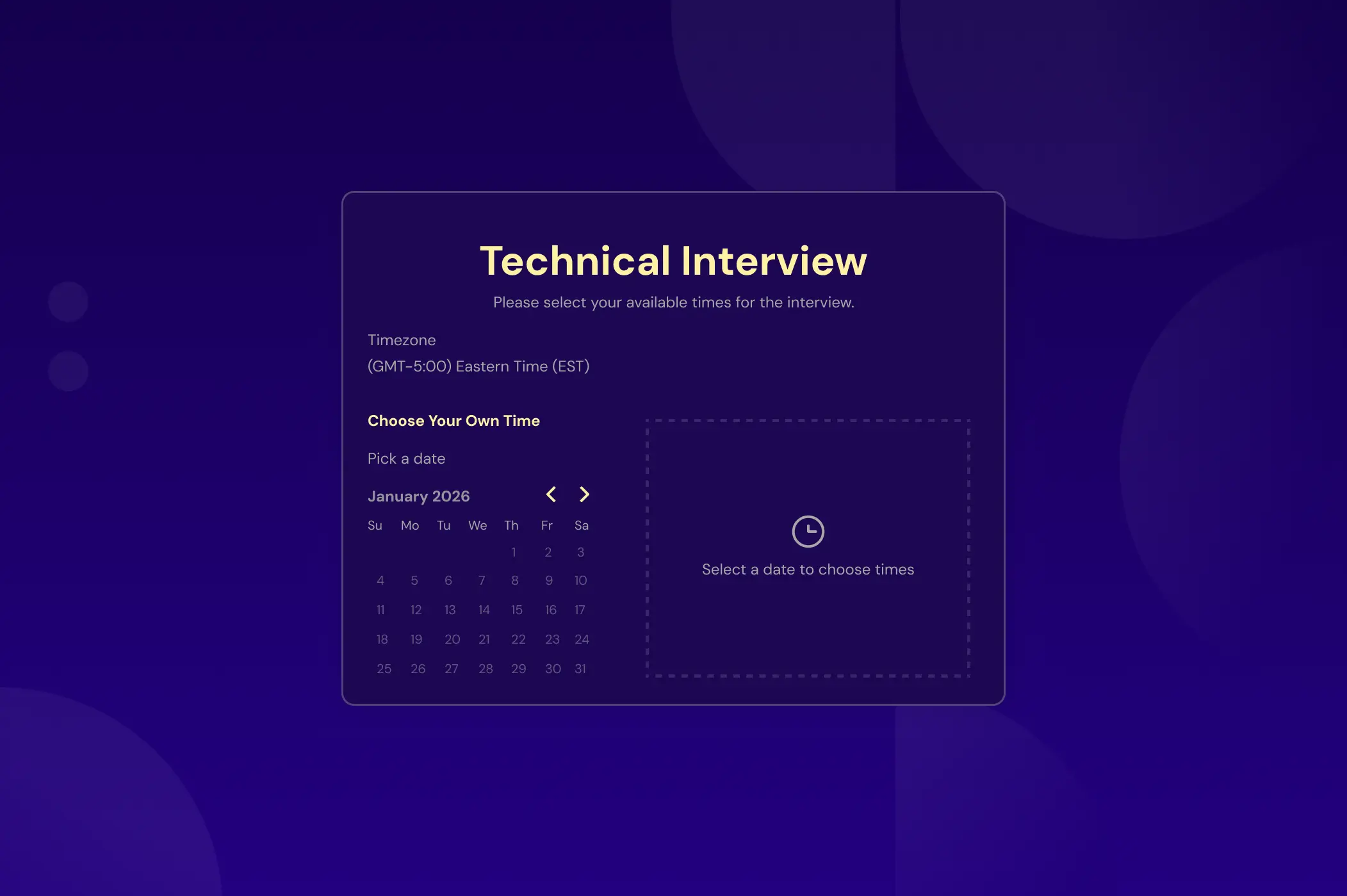

Connect your tools and build agents that operate across systems. Build agents that reference historical documents: past alignment guides, previous job descriptions, pipeline data. Pilot autonomous scheduling through self-service candidate portals that respect interviewer availability, time zones, and panel requirements. Begin monitoring how LLMs describe your employer brand to candidates.

This is where purpose-built coordination platforms become critical. Platforms like candidate.fyi provide the scheduling infrastructure, ATS integration, and candidate communication layer that allow TA teams to deploy custom agents for each area of their hiring process without building connectivity from scratch. Teams at Phase 2 can plug into an existing coordination layer that handles interviewer pooling, calendar intelligence, pulse feedback, and real-time dashboard visibility.

Address token cost governance at this stage. Set usage boundaries, monitor consumption, and establish clear ownership over which agents access which systems. The mid-size data company in our research cited escalating token costs as the primary barrier to progressing further. Getting ahead of that problem at Phase 2 prevents it from stalling Phase 3.
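One way to make usage boundaries concrete is a per-agent budget with a named owner. The sketch below is a minimal illustration: the `TokenBudget` class, the 80% alert threshold, and the limits are hypothetical, and real enforcement would sit in whatever gateway or billing layer your LLM provider exposes.

```python
from dataclasses import dataclass


@dataclass
class TokenBudget:
    """Monthly token allowance for one agent (all limits are illustrative)."""
    agent: str
    owner: str
    monthly_limit: int
    alert_ratio: float = 0.8
    used: int = 0

    def record(self, tokens: int) -> str:
        """Add usage and return 'ok', 'alert', or 'blocked'."""
        if self.used + tokens > self.monthly_limit:
            return "blocked"   # refuse the call; usage is not recorded
        self.used += tokens
        if self.used >= self.alert_ratio * self.monthly_limit:
            return "alert"     # notify the owner before the cap is hit
        return "ok"


budget = TokenBudget(agent="alignment-guide-drafter", owner="ta-ops",
                     monthly_limit=1_000_000)
first = budget.record(500_000)    # well under the alert threshold
second = budget.record(350_000)   # crosses 80% of the monthly limit
third = budget.record(200_000)    # would exceed the limit, so it is refused
```

Tying each budget to an `owner` reflects the ownership point above: someone specific is accountable when an agent hits its alert threshold.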

Phase 3: Autonomize (12+ Months)

Deploy fully autonomous coordination agents that run scheduled workflows without human prompting. Typical workflows include daily capacity reports delivered to Slack, pipeline health alerts that surface bottlenecks before candidates drop off, and interviewer load balancing that prevents burnout.

Shift recruiting coordinator focus from calendar management into strategic advisory roles. Implement pulse feedback at every interview stage. Build real-time dashboards that surface problems early, before they lead to ghosting or withdrawal.

The transition from Phase 2 to Phase 3 requires the most significant change management investment. Coordinators must trust that agents will handle the operational scan reliably enough that they can stop checking manually. Hiring managers must accept that candidate scheduling decisions happen without their direct involvement at each step. Those trust questions are not technical problems. They are organizational ones.

What Agentic AI ROI Looks Like in Practice

The ROI case for agentic coordination is cleaner than in most areas of the business because the inputs and outputs are measurable.

Manual scheduling takes 243 minutes per interview. Agent-assisted self-scheduling takes 27 minutes, a 9x improvement. That difference scales quickly at enterprise hiring volume: at 216 minutes saved per interview, a team running 100 interviews per week recovers roughly 360 coordinator hours per week from scheduling automation alone.
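The time-savings arithmetic can be reproduced directly. This sketch uses only the 243-minute and 27-minute figures from the text. The function name is illustrative, and it assumes every interview realizes the full saving, which real teams may not see.

```python
MANUAL_MINUTES = 243   # manual scheduling time per interview (from the text)
AGENT_MINUTES = 27     # agent-assisted self-scheduling time per interview


def hours_recovered(interviews: int) -> float:
    """Coordinator hours saved across a given number of interviews."""
    saved_minutes = (MANUAL_MINUTES - AGENT_MINUTES) * interviews
    return saved_minutes / 60


speedup = MANUAL_MINUTES / AGENT_MINUTES   # 9.0
weekly_hours = hours_recovered(100)        # 360.0 hours at 100 interviews per week
```

Swapping in your own weekly interview volume gives a first-pass estimate to compare against measured results.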

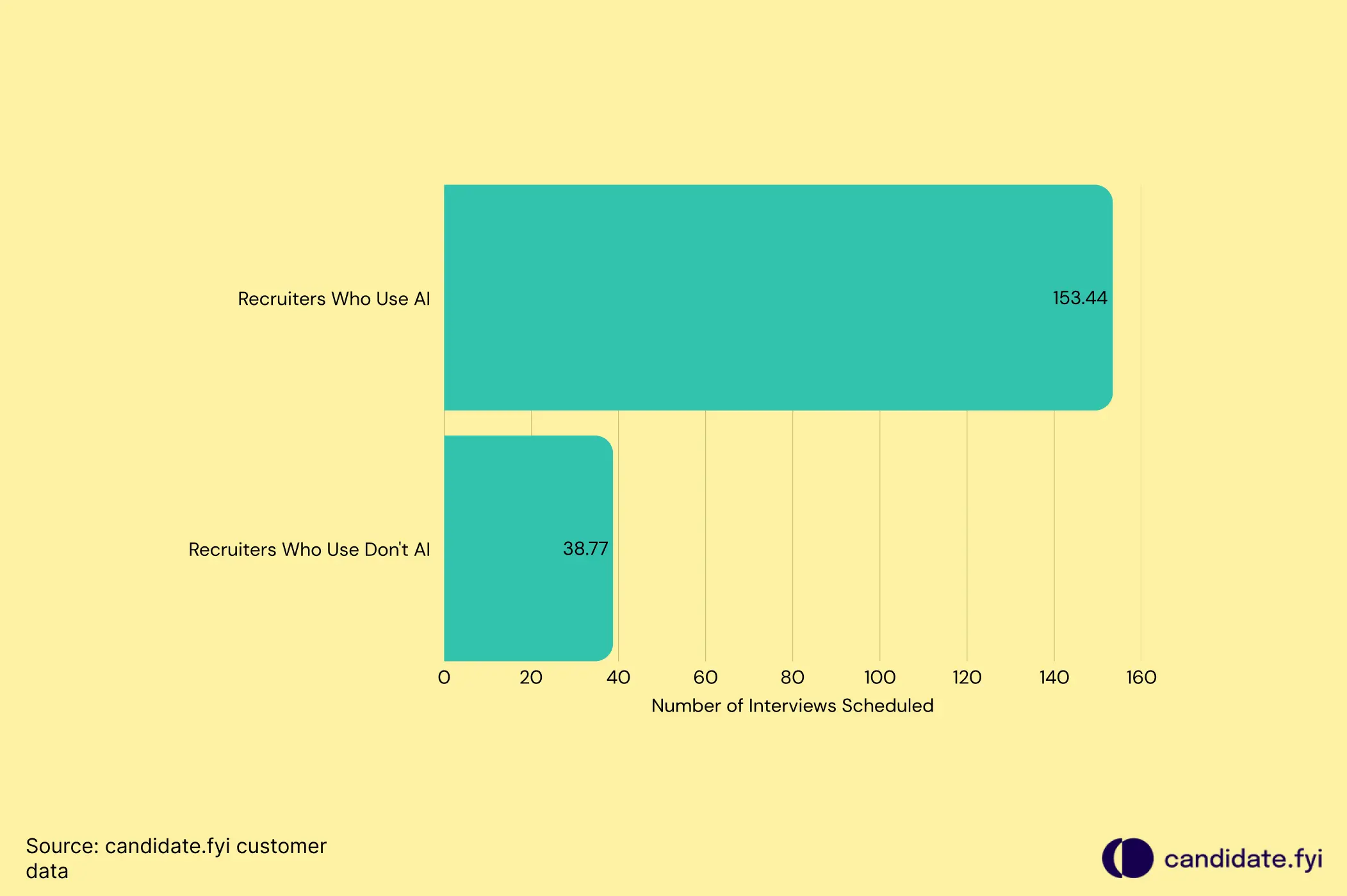

The capacity unlock confirms the magnitude: candidate.fyi's platform data shows that AI-enabled coordinators handle 158 interviews per week, versus roughly 30 for a manually-operated coordinator. With 46% of coordination tasks handled autonomously by AI agents and 26% handled through candidate self-service, coordinators focus their time on the 28% that requires human judgment.

At a business level, organizations utilizing agentic scheduling were 1.6 times more likely to achieve near-perfect hiring goal attainment. The connection between coordination velocity and downstream hiring outcomes is direct.

Relativity Space shows what this looks like in a specific organization. Cory O'Brien, TA leader at Relativity Space, shared the progression on candidate.fyi as it was happening: "In our first month, we averaged 2.8 days to schedule interviews. Now, just halfway through month two, we've reduced that to 16.2 hours." That is a 76% improvement, from 67.2 hours down to 16.2.

The operational improvement did not require a rebuilt process or an extended implementation. It required the right coordination infrastructure.

The Governance Risks Most TA Teams Aren't Planning For

Gartner predicts that over 40% of agentic AI projects will be canceled by the end of 2027. The failures are rarely about the technology itself.

Data foundations are the most common starting point for trouble. Agents need clean, real-time data to function reliably. When data lives in silos or requires manual entry, agents become prone to loops and hallucinations that erode team trust faster than any productivity gain can rebuild it.

Escalating token costs are the second barrier. Consumption-based pricing means a single power user or a poorly scoped agent can generate significant expenses without guardrails. One TA operations leader in our research described a team member whose AI usage cost the company $150,000 in a single month. In context, that person was doing the work of five engineers, but the point stands: consumption without governance produces unpredictable costs.

Governance gaps form the third category. Agents operate with delegated authority and dynamic data access patterns that traditional security models were not designed for. Autonomous agents introduce new categories of risk, including context misinterpretation and cascading errors, where a mistake made by one agent propagates across the entire automated workflow. The EU AI Act and emerging industry-specific standards now demand transparency and accountability for AI-driven decisions. As of 2025, 55% of organizations reported being unprepared for these emerging regulations.

The organizations succeeding with agentic systems implement graduated autonomy rather than deploying full independence from day one. The approach moves through four tiers: shadow mode, where the agent suggests options and humans retain control; supervised autonomy, where the agent prepares actions for human approval; guided autonomy, where the agent acts within predefined boundaries; and full autonomy, where the agent executes end-to-end and humans intervene only by exception. That progression is not caution for its own sake. It is how you build the organizational trust that makes Stage 3 sustainable.
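The four tiers can be expressed as a simple gate on every action an agent proposes. This is a minimal sketch under stated assumptions: the `AutonomyTier` enum, the `next_step` function, and the returned labels are hypothetical names, and a real deployment would attach approval queues and audit logging to each outcome.

```python
from enum import IntEnum


class AutonomyTier(IntEnum):
    SHADOW = 1       # agent suggests options; humans retain control
    SUPERVISED = 2   # agent prepares actions for human approval
    GUIDED = 3       # agent acts within predefined boundaries
    FULL = 4         # agent executes end-to-end; humans handle exceptions


def next_step(tier: AutonomyTier, within_boundaries: bool = True) -> str:
    """Decide what happens to an action the agent proposes.

    within_boundaries only matters at the GUIDED tier in this sketch.
    """
    if tier == AutonomyTier.SHADOW:
        return "suggest_only"
    if tier == AutonomyTier.SUPERVISED:
        return "await_approval"
    if tier == AutonomyTier.GUIDED and not within_boundaries:
        return "escalate_to_human"
    return "execute"


# Example: a guided agent asked to book outside its allowed scheduling window.
decision = next_step(AutonomyTier.GUIDED, within_boundaries=False)
```

Because the tier is a single setting per workflow, a team can promote scheduling to a higher tier while keeping a riskier workflow in shadow mode.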

From Pilot to Autonomous: The Metrics That Show You're Progressing

Most teams track time-to-hire and miss the leading indicators that predict whether their agentic AI investment is actually working. These metrics tell the fuller story.

Autonomy rate measures what percentage of coordination tasks AI handles without human intervention. At Stage 1, this is effectively zero. At Stage 3, candidate.fyi's platform data shows 46% of all coordination tasks handled autonomously. Track this quarterly to see whether adoption is deepening or plateauing.

Scheduling cycle time measures the elapsed time from interview request to confirmed invite. The benchmark gap between manual and automated is 243 minutes versus 27 minutes. If your cycle time is not moving toward the automated benchmark, the automation is not working as intended.

Coordinator capacity measures interviews managed per coordinator per week. Manual teams average 20-30. AI-enabled teams reach 80-100 at Level 4 maturity and 158 at Level 5. If capacity is not expanding as automation increases, the productivity gains are being absorbed by process friction rather than converted into additional volume.

Decline response time measures how long it takes from an interviewer declining to a replacement being confirmed. Without automation, interviewers sit on declines for nearly 3 days (68 hours). With autonomous agents proactively engaging alternatives, that drops to 21 hours. The faster a "no" becomes a resolution, the less damage each decline does to candidate momentum.

Candidate satisfaction at automated touchpoints reveals whether speed is coming at the cost of experience. Self-scheduled recruiter screens on candidate.fyi's platform score 4.63 out of 5, the highest satisfaction score across all interview types. Speed and candidate experience are not in tension when the automation is designed well.

These five metrics together give you a clear picture of where your agentic AI investment is generating real operational change and where it is still theoretical.
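Most of these metrics reduce to simple calculations over task and scheduling records. The sketch below covers autonomy rate, elapsed-time metrics, and coordinator capacity. The record fields (`handled_by`, `requested`, `confirmed`) and function names are assumptions for illustration, not a real ATS schema, and the sample data is made up.

```python
from datetime import datetime
from statistics import mean


def autonomy_rate(tasks):
    """Share of coordination tasks completed with no human intervention."""
    if not tasks:
        return 0.0
    autonomous = sum(1 for t in tasks if t["handled_by"] == "agent")
    return autonomous / len(tasks)


def avg_elapsed_minutes(events, start_key, end_key):
    """Mean elapsed minutes between two timestamps on each event."""
    return mean(
        (e[end_key] - e[start_key]).total_seconds() / 60 for e in events
    )


def coordinator_capacity(interviews_per_week, coordinators):
    """Interviews managed per coordinator per week."""
    return interviews_per_week / coordinators


# Illustrative records only; real data would come from your ATS or scheduling tool.
tasks = [
    {"handled_by": "agent"},
    {"handled_by": "candidate_self_service"},
    {"handled_by": "agent"},
    {"handled_by": "human"},
]
requests = [
    {"requested": datetime(2026, 1, 5, 9, 0), "confirmed": datetime(2026, 1, 5, 9, 30)},
    {"requested": datetime(2026, 1, 5, 10, 0), "confirmed": datetime(2026, 1, 5, 10, 24)},
]

rate = autonomy_rate(tasks)                                      # 0.5
cycle = avg_elapsed_minutes(requests, "requested", "confirmed")  # 27.0
capacity = coordinator_capacity(316, 2)                          # 158.0
```

The same `avg_elapsed_minutes` helper can serve decline response time by passing the decline and replacement-confirmation timestamps instead. Candidate satisfaction comes from survey scores and is not modeled here.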

Q&A

What is agentic AI for HR and how does it differ from standard recruiting automation?

Agentic AI for HR refers to autonomous systems that take a high-level goal, break it into sequential steps, access multiple systems (ATS, calendars, communication tools), and execute without requiring a human prompt at each stage. Standard recruiting automation handles individual, predefined tasks, like sending a confirmation email or populating an ATS field. Agentic systems reason through dependencies, manage exceptions, and initiate work on their own. The operational difference is whether a human is still driving the workflow or whether the system manages the workflow and humans govern outcomes.

How long does it take for a TA team to move from chatbot AI to agentic AI?

Based on the enterprise organizations in the 2026 Agentic TA Operations Blueprint, Phase 1 standardization work (custom agents for JD writing, feedback consolidation, interview prep) can be completed in weeks with existing enterprise LLM licenses. Phase 2 connectivity work, where agents access multiple systems and autonomous scheduling begins, typically takes 3-6 months. Full Phase 3 autonomy, with scheduled workflows running without human prompting, is a 12+ month horizon for most teams. Teams that rush this sequence and skip foundational process work consistently struggle to scale.

What recruiting workflows deliver the fastest ROI from agentic AI?

Interview coordination delivers the fastest and most measurable ROI because the before and after metrics are concrete. Manual scheduling takes 243 minutes per interview; agentic self-scheduling takes 27 minutes. Scheduling costs drop from $15-25 per hire to under $1. Coordinator capacity jumps from 20-30 interviews per week to 80-100+ at full automation. Sourcing and screening deliver strong returns as well (75% reduction in screening labor, 44% higher outreach response rates), but the coordination ROI is easiest to measure and fastest to realize because the baseline metrics are already tracked by most enterprise teams.

What governance risks should TA leaders plan for before deploying agentic AI?

Three risks account for most agentic AI project failures. Data foundation gaps cause agents to produce unreliable outputs when the underlying data is siloed or requires manual entry. Escalating token costs create unpredictable expenses without usage boundaries and governance oversight. Governance gaps around delegated authority and decision traceability create compliance exposure, particularly as the EU AI Act and emerging industry standards require transparency for AI-driven hiring decisions. The mitigation framework is graduated autonomy: starting in shadow mode, moving to supervised and guided autonomy, and reaching full autonomy only after organizational trust has been established at each prior stage.
